My Tuesday looked nothing like any other Tuesday I've had

I'm working on a consulting engagement for a large country office in the international development space.

The kind of work where you're getting dozens of documents and spreadsheets from the client, spending hours on calls digging into their work challenges and opportunities, looking at transactional and budget data, and trying to piece it all together into something clear, defensible, and actionable.

I have ten separate recommendations. Each one pulls data from different spreadsheets, call transcripts, memos, etc., and each requires a different analysis and its own detailed write-up with numbers, scenarios, and projected savings.

This is normally weeks of work. Even if I blocked out full days for it, I'd be chipping away at one recommendation at a time, slowly building the picture.

Especially since most of these aren't areas where I have deep expertise. We're talking fleet management across dozens of vehicles, thousands of taxi trips' worth of data, warehouse consolidation scenarios across multiple facilities, and staffing affordability projections over four years. The kind of number-heavy analysis that takes real time to do well.

This week, instead of doing all that myself, I had my top employee, Claude, do it.

But not just one Claude. I essentially had 3-5 Claudes working at any given time.

It felt next level.

I built the pattern once, then cloned it

Here's what I actually did.

I started with the first recommendation and worked through it with one Claude Cowork session.

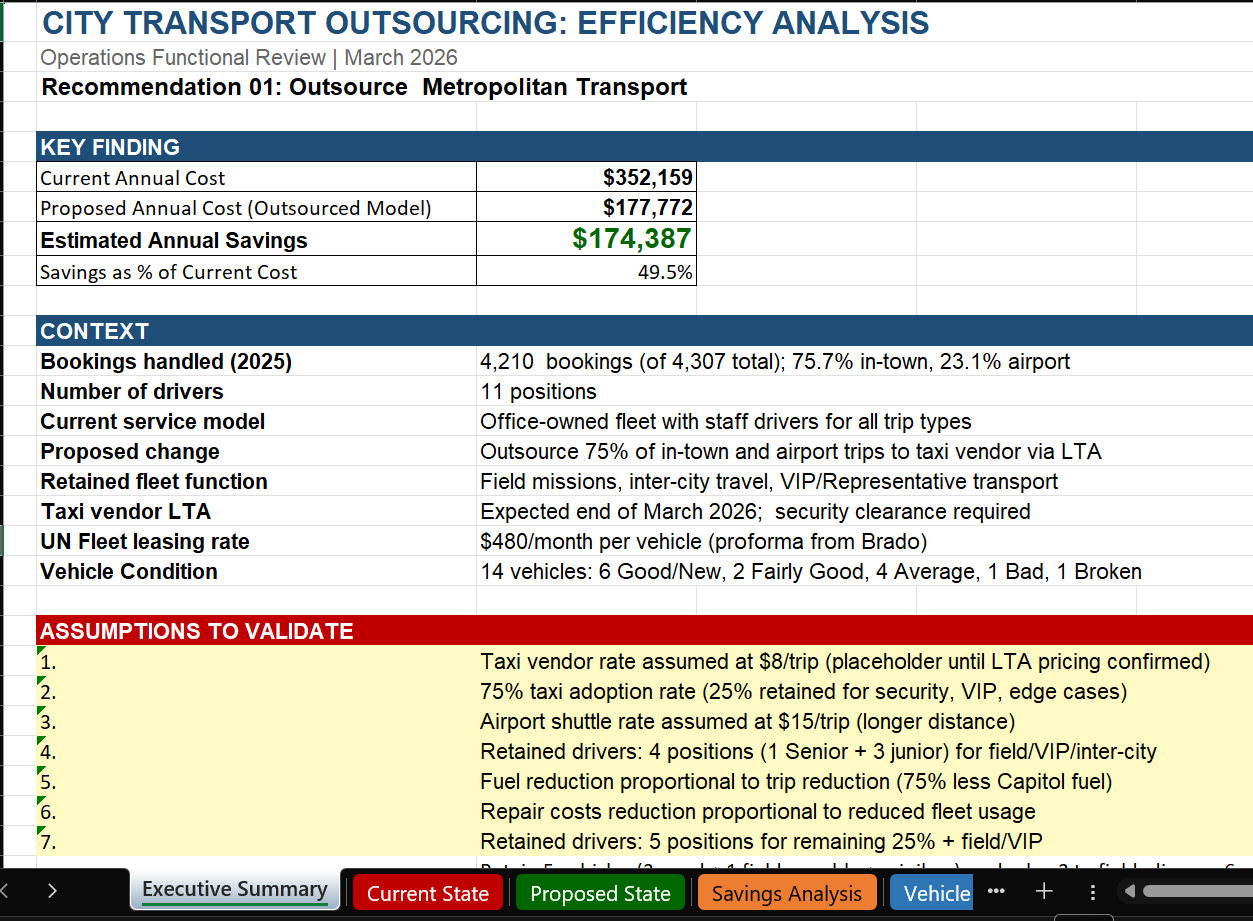

I gave it the data, told it what I wanted the analysis to look like, and we went back and forth until the output was solid: a detailed spreadsheet with the data analysis, projected savings, implementation scenarios, the whole thing:

Claude built this whole spreadsheet, color-coding, formulas and all

Then I did something that made everything else easier.

I had Claude update the project's instruction file (a CLAUDE.md that every session reads on startup) with the structure and format we'd just created.

That way, when I opened new sessions for the other recommendations, each one already knew what the output should look like.

So I'd open a new session, point it at the right data, tell it which recommendation to tackle, and it would follow the same pattern.

One session was building out a fleet rationalization analysis. Another was crunching warehouse costs across five locations and modeling consolidation scenarios. Another was calculating how many staff hours could be recovered through business process improvements.

Each one produced detailed spreadsheets and formatted write-ups. I'm talking real analysis here:

One session identified that a warehouse facility was processing 59% of the weight and volume but only 16% of total transactions.

Another found over 1,500 recoverable staff hours per year through process improvements.

These aren't surface-level summaries. They're the kind of findings that have a real impact on a client’s operations.
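To make the warehouse finding concrete, here's a minimal sketch of the arithmetic behind that kind of comparison: each facility's share of total weight set against its share of total transactions. The facility names, the row layout, and every number below are made up for illustration. This is not the client's data and not the spreadsheet Claude produced.

```python
from collections import defaultdict

# Hypothetical transaction log: (facility, weight_kg).
# All names and figures are invented for illustration only.
transactions = [
    ("Warehouse A", 900.0),
    ("Warehouse A", 1100.0),
    ("Warehouse B", 50.0),
    ("Warehouse B", 75.0),
    ("Warehouse B", 100.0),
    ("Warehouse B", 125.0),
    ("Warehouse B", 150.0),
    ("Warehouse C", 100.0),
    ("Warehouse C", 150.0),
    ("Warehouse C", 250.0),
]


def facility_shares(rows):
    """Return each facility's share of total weight and of total transactions."""
    weight = defaultdict(float)
    count = defaultdict(int)
    for facility, kg in rows:
        weight[facility] += kg
        count[facility] += 1

    total_weight = sum(weight.values())
    total_count = sum(count.values())
    if total_weight == 0 or total_count == 0:
        raise ValueError("need at least one transaction with non-zero weight")

    return {
        facility: {
            "weight_share": weight[facility] / total_weight,
            "transaction_share": count[facility] / total_count,
        }
        for facility in weight
    }


shares = facility_shares(transactions)
for facility, s in sorted(shares.items()):
    print(f"{facility}: {s['weight_share']:.0%} of weight, "
          f"{s['transaction_share']:.0%} of transactions")
```

A facility whose weight share is far above its transaction share is handling a few very large shipments, which is the sort of mismatch worth a closer look in a consolidation scenario.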

And my job became reviewing the output and checking that the numbers made sense, that the assumptions were reasonable, that the data was being pulled correctly.

Validating, not creating. Directing the analysis, not doing it.

Checking the numbers is the job now

I want to be clear about something.

This isn't a story about AI doing my work while I put my feet up. The checking is real work, and it matters.

When an AI session hands you a spreadsheet showing projected savings of $X million per year across 10 scenarios, or concluding that the office can cut Y posts, you better believe I'm going to verify those calculations. It’s my reputation on the line, and people’s jobs in the crosshairs, so I have to check that it all adds up.

I think about it like being a team lead. If you had a smart analyst on your team, you'd send them out to do the detail work.

But you'd still do spot checks. You'd poke at the numbers. You'd ask "where did this come from?" and "does this actually make sense?" That's exactly what this is.
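One way to picture a spot check like that: recompute a headline number from the underlying line items yourself and see whether it matches what the session reported. The sketch below assumes a simple "current cost minus proposed cost" savings model. The field names, the 1% tolerance, and all the figures are hypothetical, chosen only to show the idea.

```python
import math

# Hypothetical line items behind one recommendation (annual costs, made-up figures).
line_items = [
    {"name": "vehicle leases", "current_cost": 120_000, "proposed_cost": 90_000},
    {"name": "fuel", "current_cost": 60_000, "proposed_cost": 48_000},
    {"name": "maintenance", "current_cost": 40_000, "proposed_cost": 35_000},
]


def recompute_annual_savings(items):
    """Independently sum (current cost - proposed cost) over all line items."""
    return sum(item["current_cost"] - item["proposed_cost"] for item in items)


def spot_check(reported_savings, items, rel_tol=0.01):
    """Compare a reported figure with an independent recomputation.

    Returns (matches, recomputed). rel_tol is an assumed tolerance for rounding.
    """
    recomputed = recompute_annual_savings(items)
    matches = math.isclose(reported_savings, recomputed, rel_tol=rel_tol)
    return matches, recomputed


for reported in (47_000, 52_000):
    matches, recomputed = spot_check(reported, line_items)
    status = "OK" if matches else "CHECK THIS"
    print(f"reported {reported:,} vs recomputed {recomputed:,}: {status}")
```

A mismatch doesn't say who is wrong, only that it's time to ask "where did this come from?"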

And honestly, there's a healthy nervousness that comes with it. I don't want to submit something to a client that has gaps or wrong numbers because I trusted the AI too much. That nervousness keeps me sharp.

Maybe it'll always be necessary. Maybe it should be.

But here's what's different. Even with all that validation work, I completed deep analysis across ten areas in a matter of days. Not weeks. Not months.

And I genuinely don't think I could have done a better job with more time. The AI could look at more data points, consider more scenarios, and produce more thorough analysis than I could have done manually, even if I had unlimited time.

Because it's not just about speed. It's about the scope of what's possible for one person to actually process.

We’ve come a long way in a short time

About a year ago, I tried setting up an AI agent on a tool called Lindy AI. It promised a lot, but it was really more of a workflow automation tool with some AI pieces. Think more like Make or n8n.

Just getting it to review some transcripts and draft follow-up emails took weeks to set up, and it never really worked.

It wasn't anywhere close to this level of autonomous analysis. (To be fair, I’m sure Lindy has come a long way since then.)

Now I'm just talking to AI in plain language, pointing it at data, and getting back work product that I'm proud to present to a client.

That's what happens when you use AI in a structured, systematic way, with clear guidance and guardrails, and a healthy dose of skepticism.
